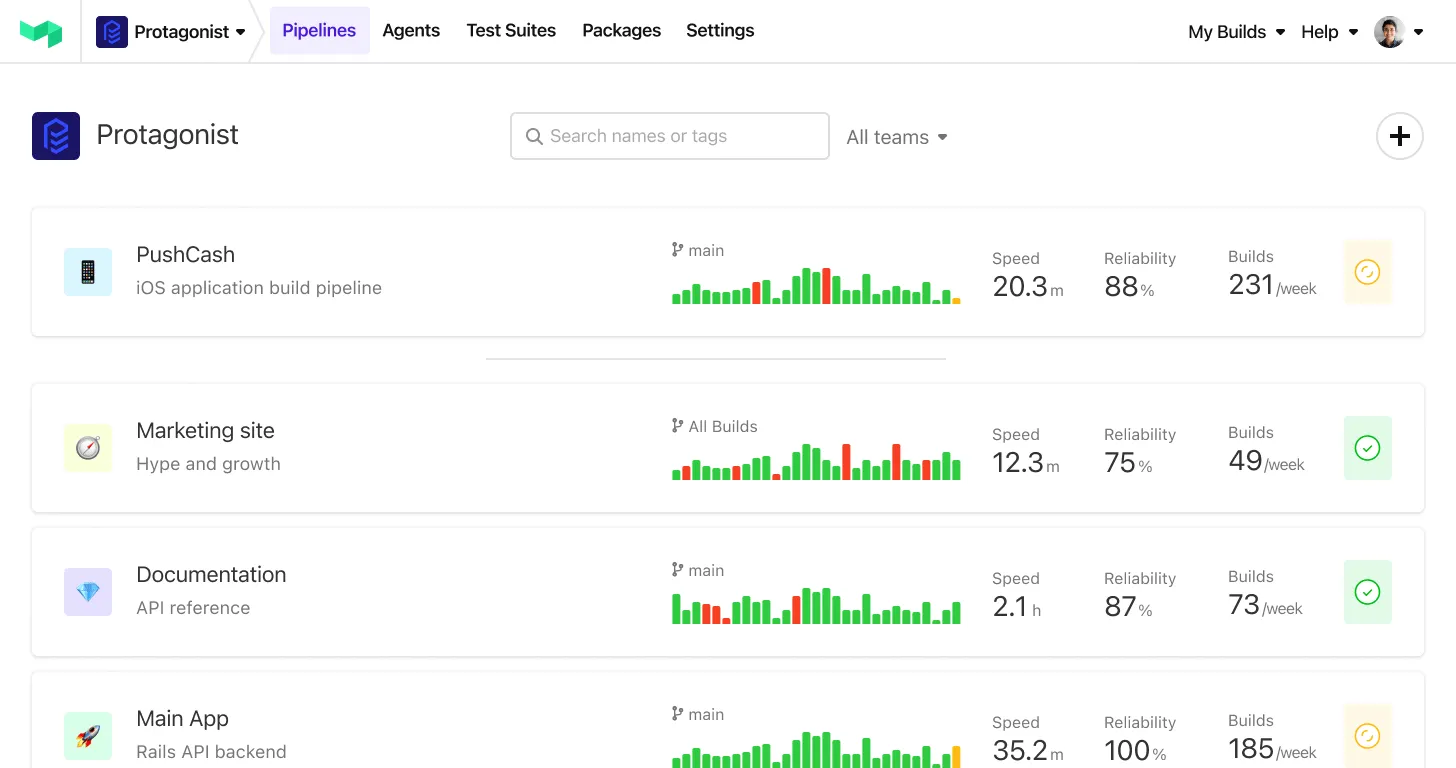

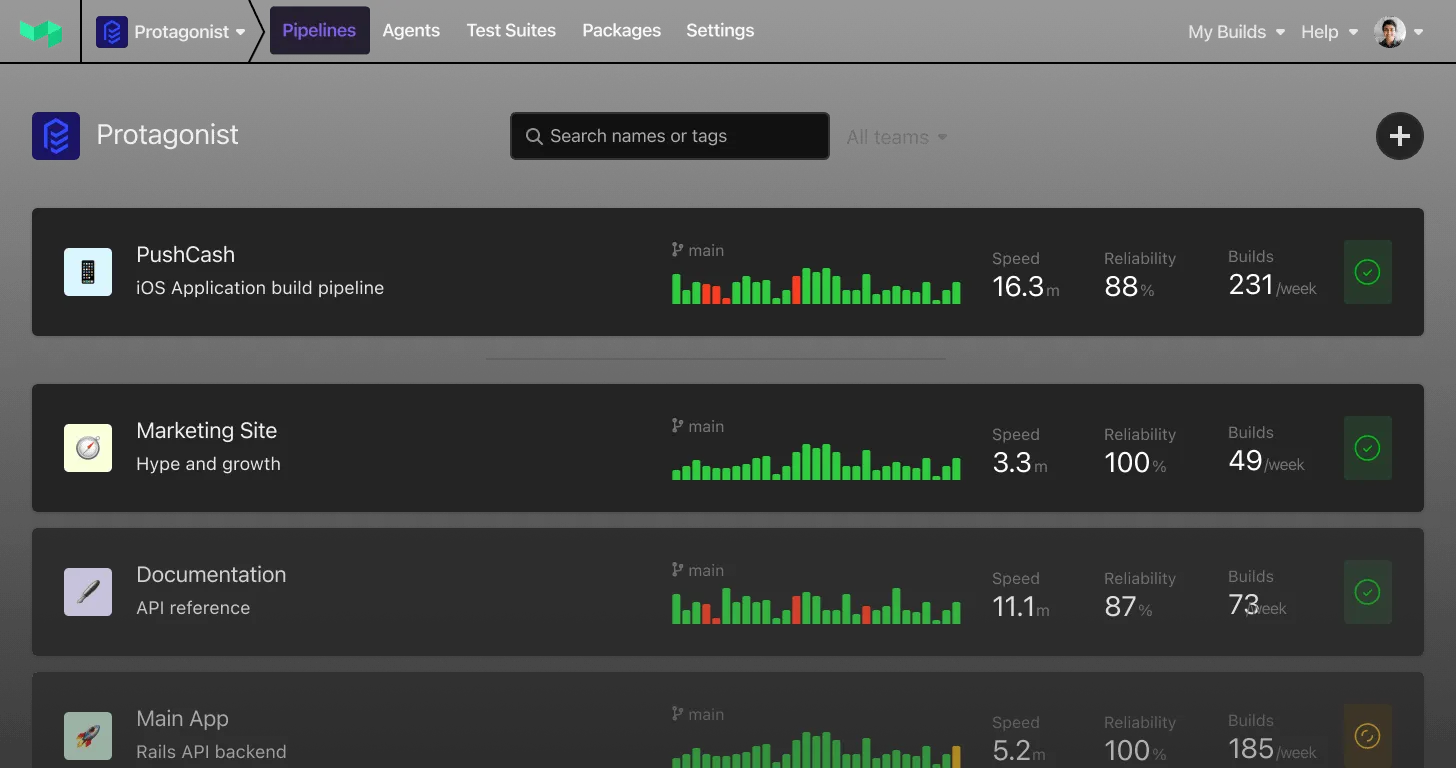

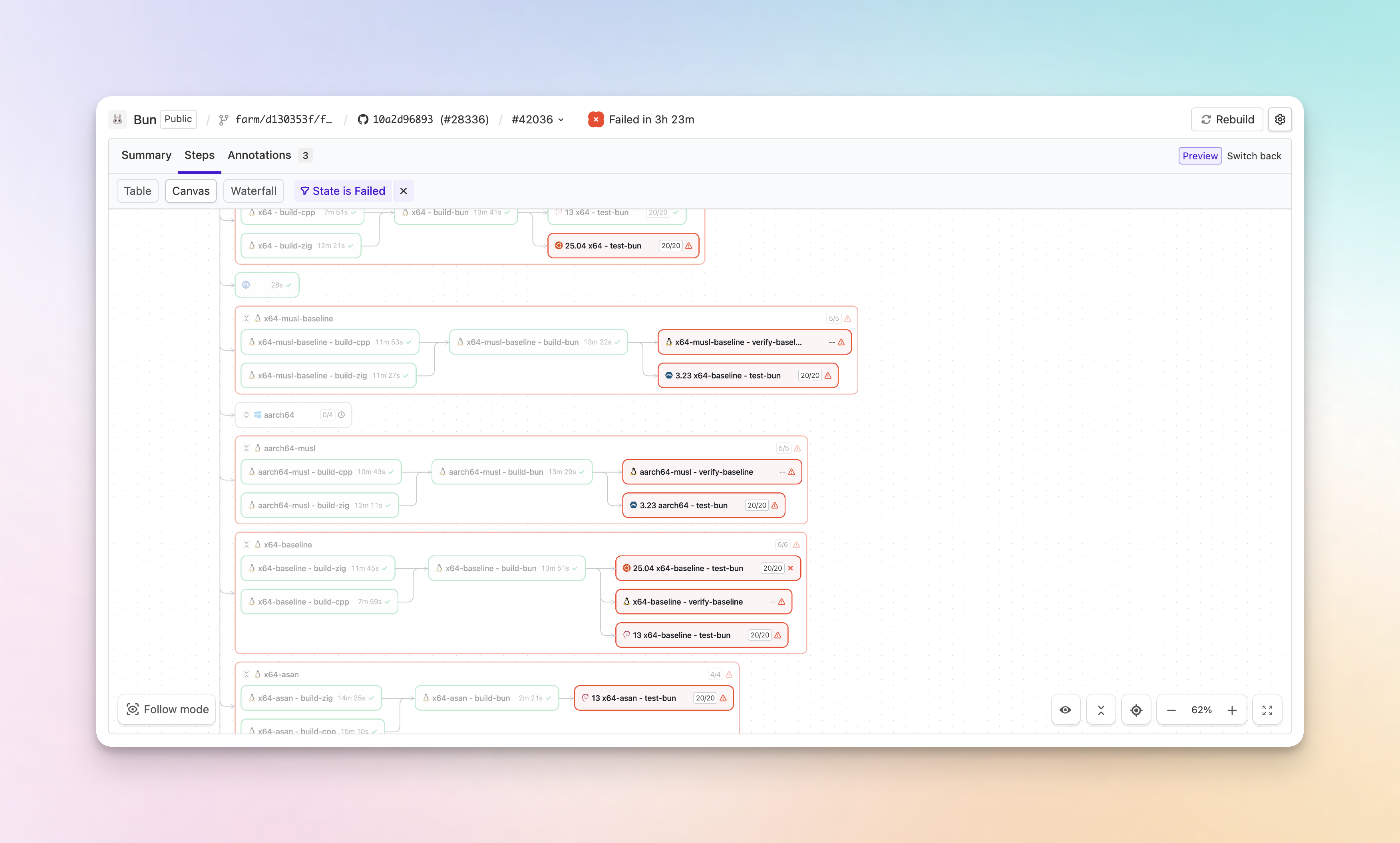

A simpler build page layout with a new list view

Finding the job you care about on a busy build just got faster. The build page has a cleaner layout, a proper list view in place of the old sidebar, and less duplicated information getting between you and what you're actually trying to inspect.

- Find the job faster: Jobs are scannable at a glance, with room to breathe. Long step names are finally readable instead of truncated into uselessness.

- A cleaner layout: Pipeline and jobs on the left, the detail you're inspecting on the right. Less hunting, more space for the thing you care about.

- Works on your phone: The list view is fully responsive, so checking in on a cheeky build while you're AFK is no longer a pinch-and-zoom ordeal.

- Filter jobs by state: Something the old sidebar couldn't do at all. Find that one scheduled step inside a running group without scrolling.

- Groups stay grouped: When you filter or group by state, parallel, matrix and group steps keep their parent visible instead of flattening, so you never lose the context of where a job belongs.

- Less duplication: Repeated headers and metadata are gone, so the information you need stands out.

- Jump to anything, from anywhere: Search straight to a job or step from any view — including the canvas.

Available now for everyone on the new build page — turn it on from your user settings if you haven't already. Tell us what you think at support@buildkite.com.

Chris

OAuth Token Exchange: short-lived API tokens from your identity provider

Buildkite now supports OAuth 2.0 Token Exchange (RFC 8693), letting you mint short-lived Buildkite API tokens on behalf of your user directly from your identity provider (IdP). Instead of managing long-lived API tokens, your identity provider tooling can exchange a signed JWT for a scoped, time-limited Buildkite token — no secrets to store, rotate, or worry about leaking.

How it works

First, set things up once: generate a keypair in your infrastructure, keep the private key with your tooling for signing JWTs, and publish the public key on a JWKS (JSON Web Key Set) host. Then register a token exchange application in your Buildkite organization settings, point it at your JWKS (inline or via URI), and configure the grantable scopes, maximum TTL, and any IP restrictions for minted tokens.

From there, each token exchange follows three steps:

- Your tooling signs a JWT with its private key and sends it to Buildkite's /oauth/token endpoint.

- Buildkite fetches your JWKS to verify the JWT's signature against your published public key.

- Buildkite mints a short-lived bktx_ token scoped within the limits of your token exchange application and returns it to your tooling. Every mint is recorded in the audit log against the application, user, and key ID.

    curl -X POST https://api.buildkite.com/v2/oauth/token \
      -d grant_type=urn:ietf:params:oauth:grant-type:token-exchange \
      -d client_assertion_type=urn:ietf:params:oauth:client-assertion-type:jwt-bearer \
      -d client_assertion=$SIGNED_JWT \
      -d audience=your-org-slug \
      -d subject_token=$USER_EMAIL \
      -d subject_token_type=urn:buildkite:params:oauth:token-type:user-email \
      -d scope="read_builds read_pipelines"

Key details

- Short-lived by design: Tokens expire automatically based on the TTL you configure (or request per-exchange), so leaked credentials are already expired.

- Scoped access: Each token exchange application defines grantable and default scopes, and callers can request a subset per token.

- IP restrictions: Lock down token exchange requests and minted tokens to specific source IP ranges.

- Full audit trail: Every token minted is logged as an audit event, tied to the token exchange application, user, and public key ID.

- Key rotation through JWKS URI: Point to your IdP's hosted JWKS endpoint and Buildkite will fetch and cache the public keys that match your JWT signing key automatically, including when keys are rotated.

- Rate limited: Organization-level rate limiting protects against abuse.
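For tooling that isn't shell-based, the curl request shown earlier can be sketched in Python using only the standard library. This is a minimal sketch, not an official client: the function name is hypothetical, the JWT is assumed to be signed elsewhere, and the request is only built here, not sent, because the response shape isn't described in this entry.

```python
from urllib.parse import urlencode, parse_qs
from urllib.request import Request

TOKEN_URL = "https://api.buildkite.com/v2/oauth/token"


def build_token_exchange_request(signed_jwt, org_slug, user_email, scopes):
    """Build (but do not send) the token exchange POST request.

    Hypothetical helper: the field names and values mirror the curl example.
    """
    form = {
        "grant_type": "urn:ietf:params:oauth:grant-type:token-exchange",
        "client_assertion_type": "urn:ietf:params:oauth:client-assertion-type:jwt-bearer",
        "client_assertion": signed_jwt,  # JWT signed with your private key
        "audience": org_slug,
        "subject_token": user_email,
        "subject_token_type": "urn:buildkite:params:oauth:token-type:user-email",
        "scope": " ".join(scopes),  # space-separated, as in the curl example
    }
    return Request(
        TOKEN_URL,
        data=urlencode(form).encode("ascii"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


# Placeholder values for illustration only.
request = build_token_exchange_request(
    "header.payload.signature",
    "your-org-slug",
    "dev@example.com",
    ["read_builds", "read_pipelines"],
)
```

Passing the result to `urllib.request.urlopen` would perform the exchange. Building the request separately from sending it keeps the signing key and the network call in different parts of your tooling.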

OAuth Token Exchange is available on Enterprise plans. To get started, see the OAuth Token Exchange docs, visit your organization's settings, or reach out to your account team.

Sorcha

Official Buildkite skills for AI coding agents

Buildkite skills are now available for Claude Code, Cursor, and other AI coding agents. Skills are short, agent-readable guides that teach an agent how to use Buildkite: pipeline conventions, the shape of our APIs, and the patterns we recommend for things like dynamic pipelines, OIDC federation, and CI migrations.

Install them with one command:

    npx skills add buildkite/skills

Skills sit alongside the docs rather than replacing them. Where docs explain what a Buildkite feature does, a skill teaches an agent how to use it well: recommended patterns, common mistakes, and the judgement calls an experienced user makes.

What's in the repo today

- buildkite-pipelines: designing a pipeline, tuning caching, debugging builds, generating dynamic steps, and running matrix builds

- buildkite-agent-runtime: annotating builds, uploading and fetching artifacts, passing data between steps, uploading dynamic steps from inside a job, and federating with cloud providers via OIDC

- buildkite-cli: triggering and inspecting builds from your terminal, managing pipelines and secrets locally, and scripting against Buildkite

- buildkite-api: automating Buildkite from your own systems, building integrations, querying build data at scale, and reacting to events with webhooks

- buildkite-migration: planning a move to Buildkite from GitHub Actions, Jenkins, CircleCI, Bitbucket Pipelines, or GitLab CI, and converting existing pipeline YAML

- buildkite-preflight: validating local pipeline changes against real CI before you push

Contributing

The repo welcomes contributions: patterns from your own pipelines, gotchas you've hit in production, and corrections to anything wrong or out of date. See github.com/buildkite/skills for installation instructions for individual agents and contribution guidelines.

Product

Per-user rate limits for the Buildkite API

The Buildkite REST and GraphQL APIs now rate limit on a per-user basis, so one runaway script can't chew through your organization's entire API quota.

There are two limits, applied together:

- Organization-wide limit: Caps total API usage across all human and machine users in your organization.

- Per-user limit: Caps API usage available to an individual user, with a separate limit for machine users.

If someone hits their per-user cap, only their requests are throttled; everyone else carries on as normal. Machine users can have a separate, higher limit to keep automations humming along.
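How the two limits interact can be pictured with a toy model. This is only an illustration of "applied together", not Buildkite's implementation: the class name and the limit values are made up, and a real limiter would also reset its counters each time window.

```python
from collections import Counter


class TwoTierLimiter:
    """Toy model: a request passes only if the user's budget AND the
    organization's budget both have room. Hypothetical, single window."""

    def __init__(self, org_limit, user_limit):
        self.org_limit = org_limit
        self.user_limit = user_limit
        self.org_used = 0
        self.user_used = Counter()

    def allow(self, user):
        if self.user_used[user] >= self.user_limit:
            return False  # only this user is throttled
        if self.org_used >= self.org_limit:
            return False  # the whole organization is out of budget
        self.user_used[user] += 1
        self.org_used += 1
        return True


limiter = TwoTierLimiter(org_limit=10, user_limit=3)
runaway = [limiter.allow("runaway-script") for _ in range(5)]
teammate = limiter.allow("alice")
```

In this model the runaway script is cut off after its third request and never consumes more than its own share, so `alice` still gets through.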

For existing organizations, we've initially set the per-user limit to match the organization-wide limit, so no users will be limited beyond what your organization-wide limit is currently set to.

Per-user limits reduce the risk of a single user consuming too much of your organization-wide limit and disrupting your regular CI/CD operations. When you're ready to tighten control over per-user API usage, contact support to enable stricter per-user limits.

New organizations will have both limits set to reasonable levels by default.

Your existing API tokens and integrations will keep working without any changes.

For more details, see the REST API rate limits and GraphQL API rate limits documentation.

Jason

Trigger pipelines from more GitHub events

Buildkite pipelines can now be triggered by a much broader set of GitHub webhook events — not just pushes and pull requests. This makes it easier to migrate GitHub Actions workflows to Buildkite incrementally, without losing triggers along the way.

New event triggers

You can now trigger pipeline builds from:

- Pull request reviews: run builds when a review is submitted or dismissed

- Check runs: react to completed check runs from other GitHub Apps, with built-in loop prevention for Buildkite's own checks

- Releases: trigger builds when a GitHub release is published, created, or released

- Issue comments: kick off builds from PR comments, with configurable command word filtering (supports exact and contains match modes)

- Pull request review comments: trigger builds from inline diff comments, with the same command word and match mode support (useful for AI assistant triggers like @claude)

- Deployment statuses: build when a deployment status changes

- Branch and tag creation: trigger on create events for new branches or tags
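The difference between the two command word match modes can be sketched as below. The precise matching rules aren't spelled out in this entry, so this assumes that exact means the whole (trimmed) comment equals the command word and contains means the command word appears anywhere in the comment. The function name is hypothetical.

```python
def comment_triggers_build(comment, command_word, mode="exact"):
    """Illustrative model of command word filtering (assumed semantics)."""
    text = comment.strip()
    if mode == "exact":
        # Assumption: the entire comment must be the command word.
        return text == command_word
    if mode == "contains":
        # Assumption: the command word may appear anywhere in the comment.
        return command_word in text
    raise ValueError(f"unknown match mode: {mode}")
```

Under these assumptions, a comment such as `@claude please fix the lint errors` would only trigger a build in contains mode, which is why that mode suits assistant-style mentions.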

Expanded pull request actions

Pull request triggers now support additional actions beyond opened and synchronize:

Builds can now be triggered by edited, reopened, converted_to_draft, review_requested, and dequeued actions, in addition to the existing ready_for_review, labeled, and unlabeled actions.

Disable all webhooks

You can now disable all GitHub webhook-triggered builds for a pipeline with a single button. Select Disable GitHub Webhooks in your pipeline's GitHub settings to stop all webhook processing. Your existing trigger settings are preserved and restored when you re-enable.

Better filtering

GitHub webhook-triggered builds also now include BUILDKITE_GITHUB_EVENT and BUILDKITE_GITHUB_ACTION environment variables, available at runtime and in build.env() conditionals. These are also included as new conditional variables to make it easy to write fine-grained build filters:

- build.source_event: the GitHub event type (e.g. push, pull_request, release)

- build.source_action: the event action (e.g. labeled, opened, submitted)

We've also added build.pull_request.label, which allows for filtering on the specific label that was just added or removed, rather than the whole set of labels.

All the new events expose relevant context as environment variables via build.env() that you can use in pipeline and step conditionals. This allows for more fine-grained filtering before you start running builds and steps.

Learn more about configuring GitHub webhook triggers in our documentation.

Hannah

Search queues within a cluster

You can now search for queues by name on the cluster queues page, making it much faster to find a specific queue when your cluster contains hundreds of them.

- Find queues quickly: Type any part of a queue name to filter the list instantly, with case-insensitive substring matching so you don't need to remember the full name or exact prefix.

- Fast, in-page updates: Results update in place as you search, without full-page reloads, and pagination continues to work across filtered results.

- Scales to large clusters: Built for customers consolidating many queues into a single cluster as part of a cluster migration, where scanning a paginated list is no longer practical.

Chris

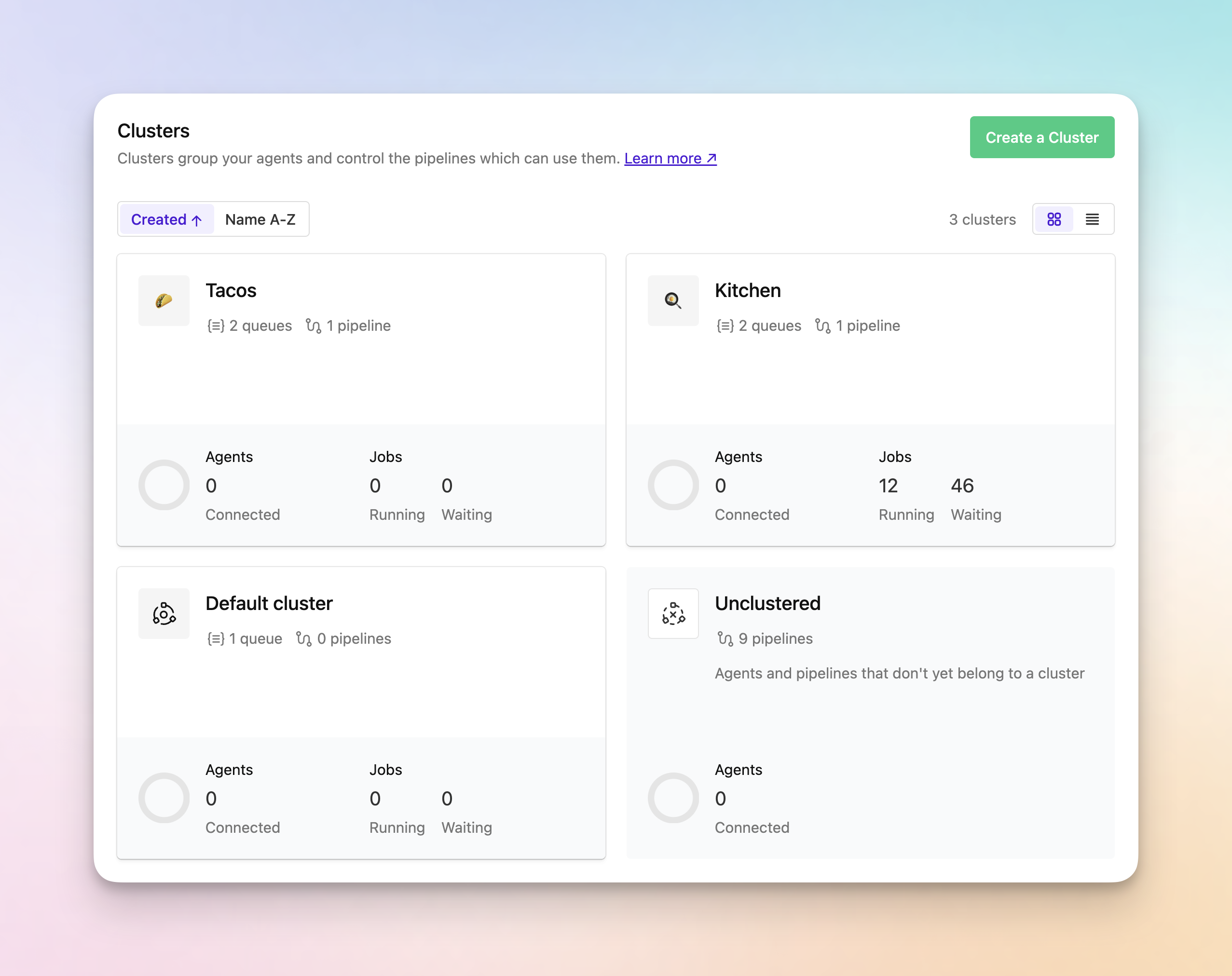

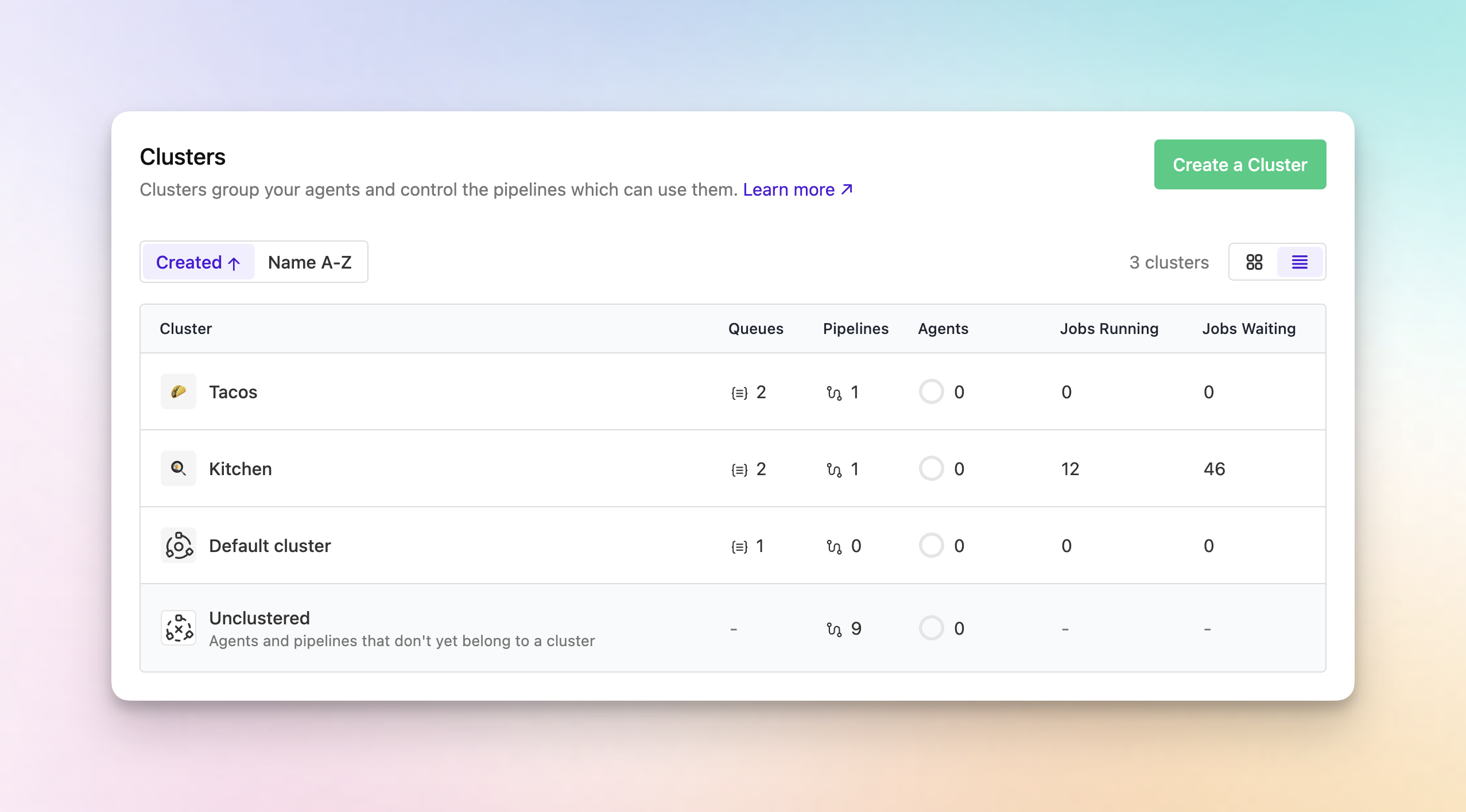

Sort clusters and switch between card and list views

If you manage a lot of clusters, you can now sort the Clusters page alphabetically and switch between the existing card layout and a new list view, making it much easier to find the right cluster without scanning a creation-ordered list.

- Sort by created date or name: Use the new sort controls to keep the default created-date ordering or switch to an alphabetical view when you need to find a cluster quickly.

- Switch between card and list views: Toggle between the familiar card layout and a new table view depending on how you prefer to scan cluster information.

- See more data in one place: The new list view shows cluster name, queues, pipelines, description, connected agents, running jobs, and waiting jobs in a sortable table that's easier to scan at scale.

- Keep your view during refreshes: Your selected sort and view settings are preserved during the page's automatic refresh.

Chris

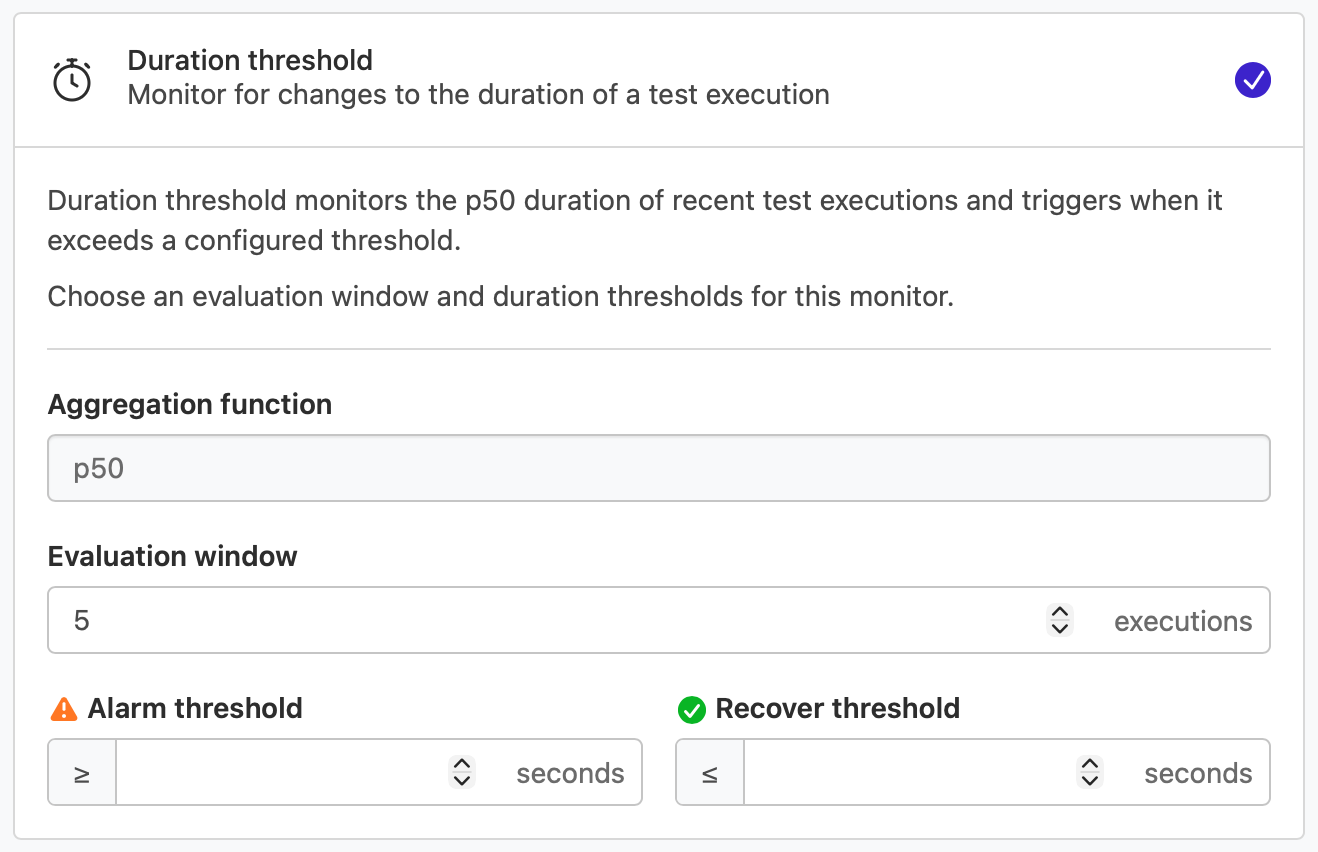

Duration threshold monitor for Test Engine Workflows

You can now catch slow tests before they erode build speed with the Duration threshold monitor in Test Engine Workflows!

The Duration threshold monitor tracks how long individual tests take to run, and triggers when the aggregated duration over a sliding window crosses a configured threshold.
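The sliding-window idea can be sketched in a few lines. This is a rough model, not the monitor's actual logic: it assumes the aggregate is a mean over the most recent runs of one test, and the class name, window size, and threshold are hypothetical.

```python
from collections import deque
from statistics import mean


class DurationThresholdMonitor:
    """Toy sliding-window monitor for a single test (assumed: mean aggregate)."""

    def __init__(self, threshold_seconds, window_size):
        self.threshold_seconds = threshold_seconds
        # deque(maxlen=...) drops the oldest run automatically.
        self.window = deque(maxlen=window_size)

    def record(self, duration_seconds):
        """Record one run; return True if the monitor would trigger."""
        self.window.append(duration_seconds)
        window_full = len(self.window) == self.window.maxlen
        return window_full and mean(self.window) > self.threshold_seconds


monitor = DurationThresholdMonitor(threshold_seconds=2.0, window_size=3)
triggered = [monitor.record(d) for d in [1.0, 1.5, 2.0, 4.0, 3.0]]
```

Aggregating over a window rather than reacting to a single run means one unusually slow execution doesn't trigger the monitor on its own.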

What's new

- Monitor test runtime against a configured threshold using a sliding window

- Full workflow action support including labeling, state management, Slack notifications, and Linear issue tracking

- Tag-based filtering to scope the monitor to specific branches, suites, or test groups

Learn more about the Duration threshold monitor in our documentation.

Naufan

Retry all failed jobs via API

You can now retry all failed jobs in a build with a single API call, matching the behavior of the "Retry Failed Jobs" button in the UI.

REST: POST /organizations/{org}/pipelines/{pipeline}/builds/{number}/retry_failed_jobs

GraphQL: buildRetryFailedJobs mutation

Both return the count of jobs queued for retry.

An optional filter parameter, states, lets you choose which job states to retry, so you can target exactly the failures that matter:

- failed: Jobs that ran and exited with a non-zero status

- soft_failed: Jobs that failed but were marked with soft_fail, so the build continues regardless

- expired: Jobs that weren't accepted by an agent before the timeout

- canceled: Jobs that were manually or programmatically canceled before completing

By default, all failed states are retried. Pass a filter to narrow it — for example, retry only failed jobs without re-queuing dozens of soft failures that would just add pressure to your queue.
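A call to the REST endpoint might be assembled like this. It is a sketch under stated assumptions: the path comes from this entry and the base URL and bearer-token header follow the usual Buildkite REST conventions, but sending the states filter as a JSON array in the request body is an assumption, and the function name is hypothetical. The request is built but not sent.

```python
import json
from urllib.request import Request

API_BASE = "https://api.buildkite.com/v2"


def build_retry_failed_jobs_request(org, pipeline, number, api_token, states=None):
    """Build (but do not send) a retry_failed_jobs request."""
    url = (
        f"{API_BASE}/organizations/{org}/pipelines/{pipeline}"
        f"/builds/{number}/retry_failed_jobs"
    )
    headers = {"Authorization": f"Bearer {api_token}"}
    body = None
    if states:
        # Assumption: the filter is passed as {"states": [...]} in a JSON body.
        body = json.dumps({"states": states}).encode("utf-8")
        headers["Content-Type"] = "application/json"
    return Request(url, data=body, headers=headers, method="POST")


# Retry only hard failures, leaving soft failures alone.
retry = build_retry_failed_jobs_request(
    "my-org", "my-pipeline", 42, "YOUR_API_TOKEN", states=["failed"]
)
```

Omitting `states` leaves the body empty, which corresponds to the default of retrying all failed states.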

David

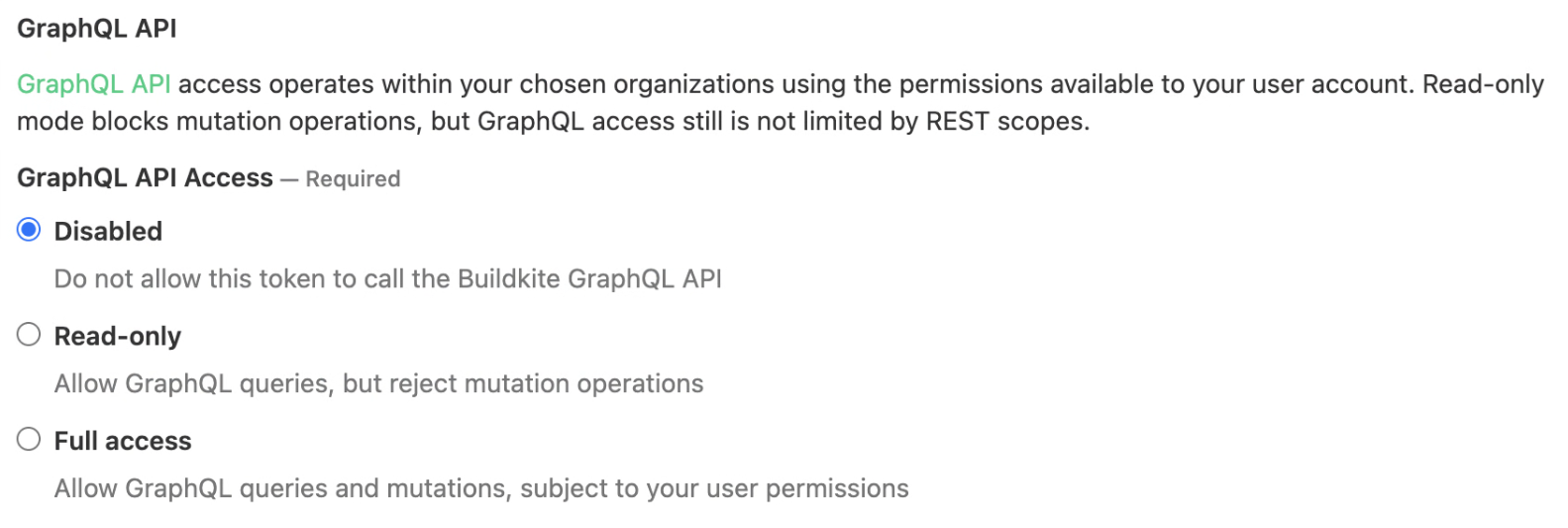

Read-only mode for GraphQL API tokens

User API access tokens now have an explicit GraphQL access mode. You can create tokens that can run GraphQL queries but not mutations.

When creating or editing a user API access token, the GraphQL permission offers three options:

- Disabled: No GraphQL API access

- Read-only: Can run queries, but mutations are rejected

- Full access: Can run both queries and mutations

Key details

- Existing tokens are unaffected: All previously created GraphQL-enabled tokens continue to work with full access.

- Mutation guard: Read-only tokens are blocked before any mutation code runs, so there is no risk of partial side effects.

- Audit visibility: The selected GraphQL access mode is displayed in the token summary and the organization API access audit view.

For more information, see the API access tokens documentation.

Lachlan

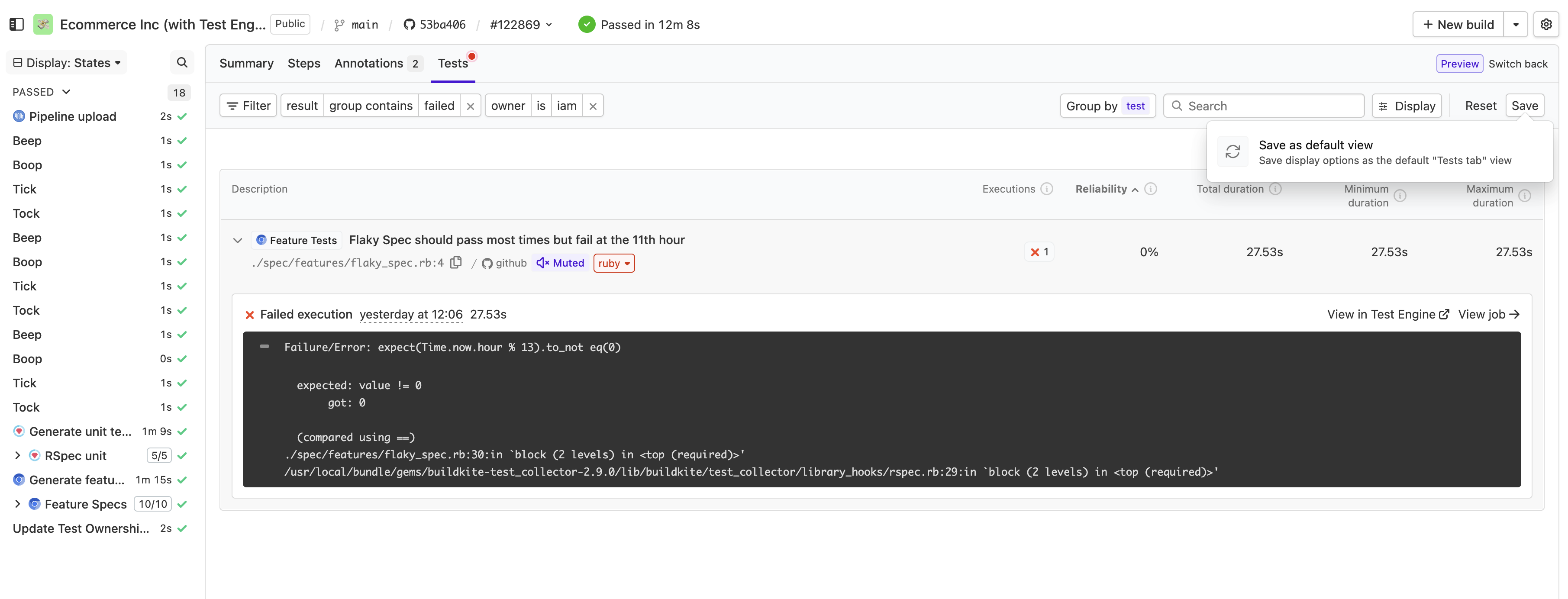

Saved default view for the Tests tab

You can now save a default filtered view directly from the Tests tab. After configuring your filters, save them as your default view so the Tests tab loads with your preferred filters each time you visit.

This makes it easier to:

- Jump straight to the test results that matter most to your team

- Share a consistent, filtered view across your organization

- Reduce time spent reconfiguring filters between visits

Meghan

Streaming job dispatch is now generally available

Agents running buildkite-agent start now receive jobs in sub-second time, with no configuration changes necessary. Agent v3.122.0 replaces the polling-based dispatch with a persistent streaming connection, enabled by default.

What changed

Agent v3.122.0 changes the default agent API endpoint to agent-edge.buildkite.com, a new Go-based edge service that maintains a ConnectRPC stream to each connected agent. When a job is dispatched, it's pushed to agents over this stream rather than waiting for the next poll.

Why this matters

Previously, agents checked for work by polling every 10 seconds, plus random jitter to avoid synchronized requests across fleets. A job dispatched right after a poll could wait up to 20 seconds before that agent checked in again. Across a pipeline with many steps, that wait compounds.

With streaming dispatch, job acceptance latency drops to under 1 second. For an average build, that can save minutes of unnecessary wait time, resulting in faster and more efficient builds.
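To see how the wait compounds, here is the worst-case arithmetic as a small sketch. It assumes steps that run strictly one after another, and it infers a maximum jitter of 10 seconds from the "up to 20 seconds" figure; the step count of 12 is an arbitrary example.

```python
def worst_case_polling_wait(sequential_steps, poll_interval=10.0, max_jitter=10.0):
    """Upper bound on dispatch wait when every step just misses a poll."""
    return sequential_steps * (poll_interval + max_jitter)


def streaming_wait_bound(sequential_steps, latency=1.0):
    """Upper bound with streaming dispatch, using the under-1-second figure."""
    return sequential_steps * latency


steps = 12  # hypothetical build with 12 dependent steps
polling_seconds = worst_case_polling_wait(steps)
streaming_seconds = streaming_wait_bound(steps)
```

For this example the polling bound is 240 seconds (4 minutes) of pure dispatch wait, against a bound of 12 seconds with streaming. Typical builds sit below the worst case, but the gap scales with the number of dependent steps.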

Availability

This is now the default for all agents running buildkite-agent start, including those on the Elastic CI Stack for AWS.

Buildkite Hosted Agents, Agent Stack for Kubernetes, and workflows using --acquire-job use different dispatch mechanisms and don't benefit from this change.

Upgrade to Agent v3.122.0 to get streaming dispatch by default. We'd love to hear how it goes! Reach out to support@buildkite.com with any feedback.

Daniel

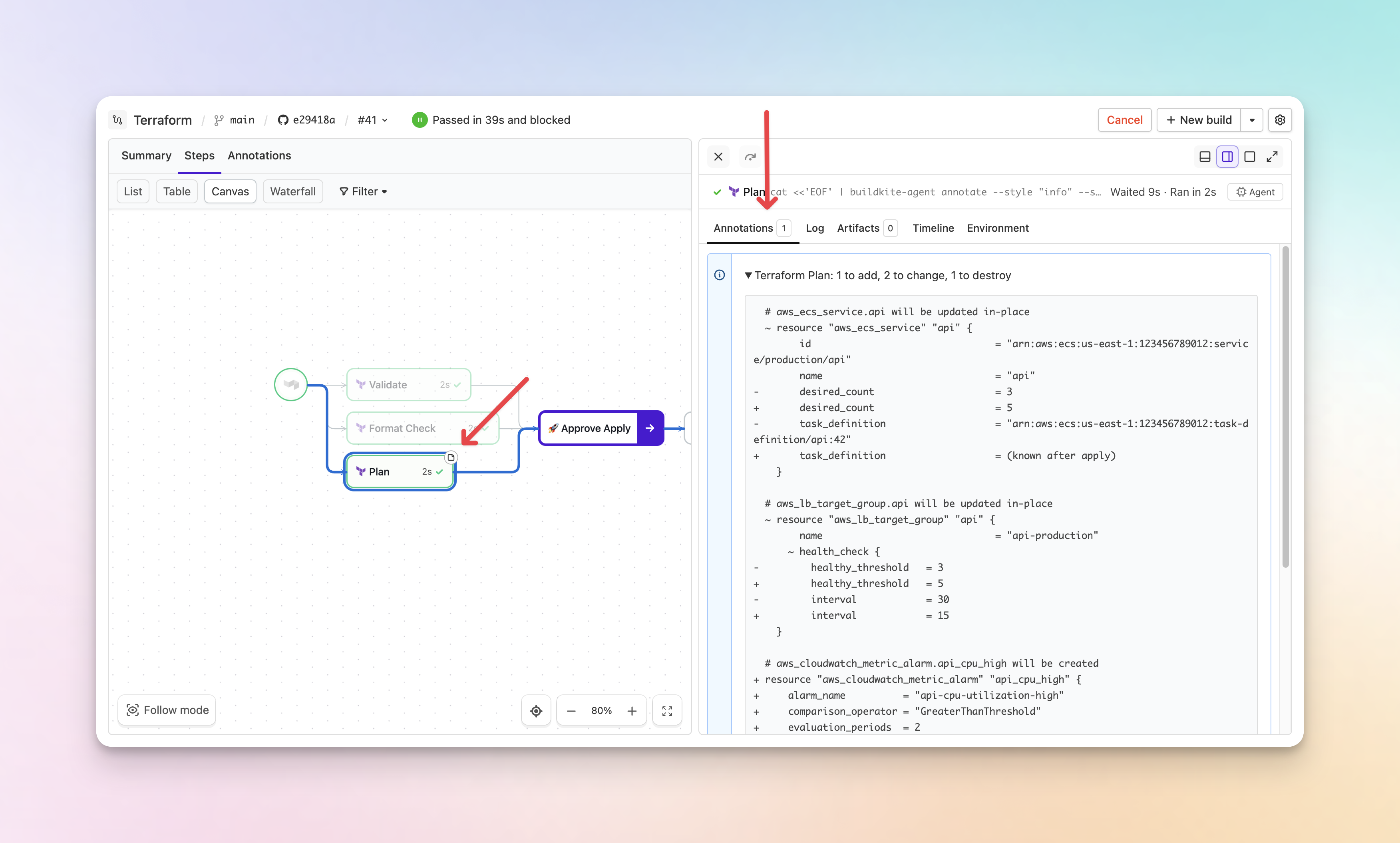

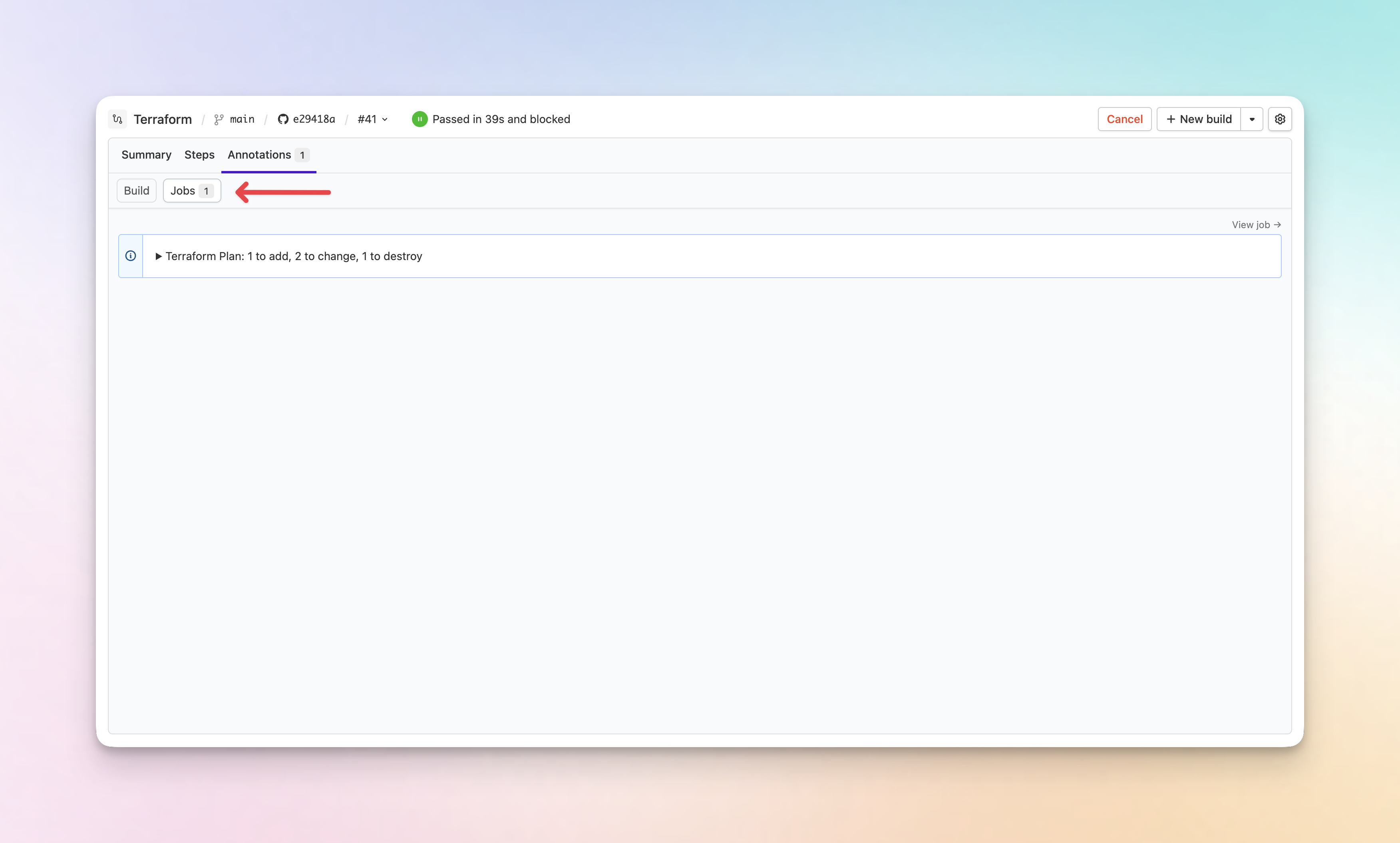

Introducing job annotations

Annotations can now be scoped to individual jobs. Previously, all annotations lived at the build level, but with large builds this meant scrolling through a list of annotations and mentally mapping each one back to its job. Now, test failures, lint errors, etc. can show up next to the job that produced them. Existing annotations are unaffected — the default scope remains build.

🆕 What's new

- Job-scoped annotations via the agent CLI: Use buildkite-agent annotate --scope job to create an annotation that's tied to the current job.

    buildkite-agent annotate --scope job "3 tests failed: see details below"

- Annotation indicators on jobs: Jobs with annotations show an indicator on the canvas. When you open a job's drawer, its annotations are available in a dedicated Annotations tab.

- Annotations tab with build and job sub-tabs: The Annotations tab now has separate Build and Jobs sub-tabs, with links to jump to the originating job.

- Full API support: Job annotations are fully supported in both the REST API and GraphQL API, including create, read, and delete operations.

- Real-time updates: Annotations update in real-time as your build runs, with no page refresh needed.

Job-scoped annotations require Buildkite Agent v3.112 or newer and are not available in the classic build page experience. For more information, see the annotations documentation.
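If your test or lint tooling is written in Python, the annotate call can be assembled as an argument list and run from inside the job. This is a minimal sketch: the helper name is hypothetical, `--scope job` comes from this entry, and `--style` and `--context` are existing annotate flags that this entry doesn't mention.

```python
import shlex


def job_annotation_command(message, style=None, context=None):
    """Return the argv for a job-scoped annotation (does not run it)."""
    command = ["buildkite-agent", "annotate", "--scope", "job"]
    if style:
        command += ["--style", style]
    if context:
        command += ["--context", context]
    command.append(message)
    return command


command = job_annotation_command("3 tests failed", style="error")
printable = shlex.join(command)  # shell-safe form, handy for logging
```

Inside a running job, `subprocess.run(command, check=True)` would create the annotation. Passing a list rather than a shell string avoids quoting problems when the message contains test names or punctuation.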

Chris

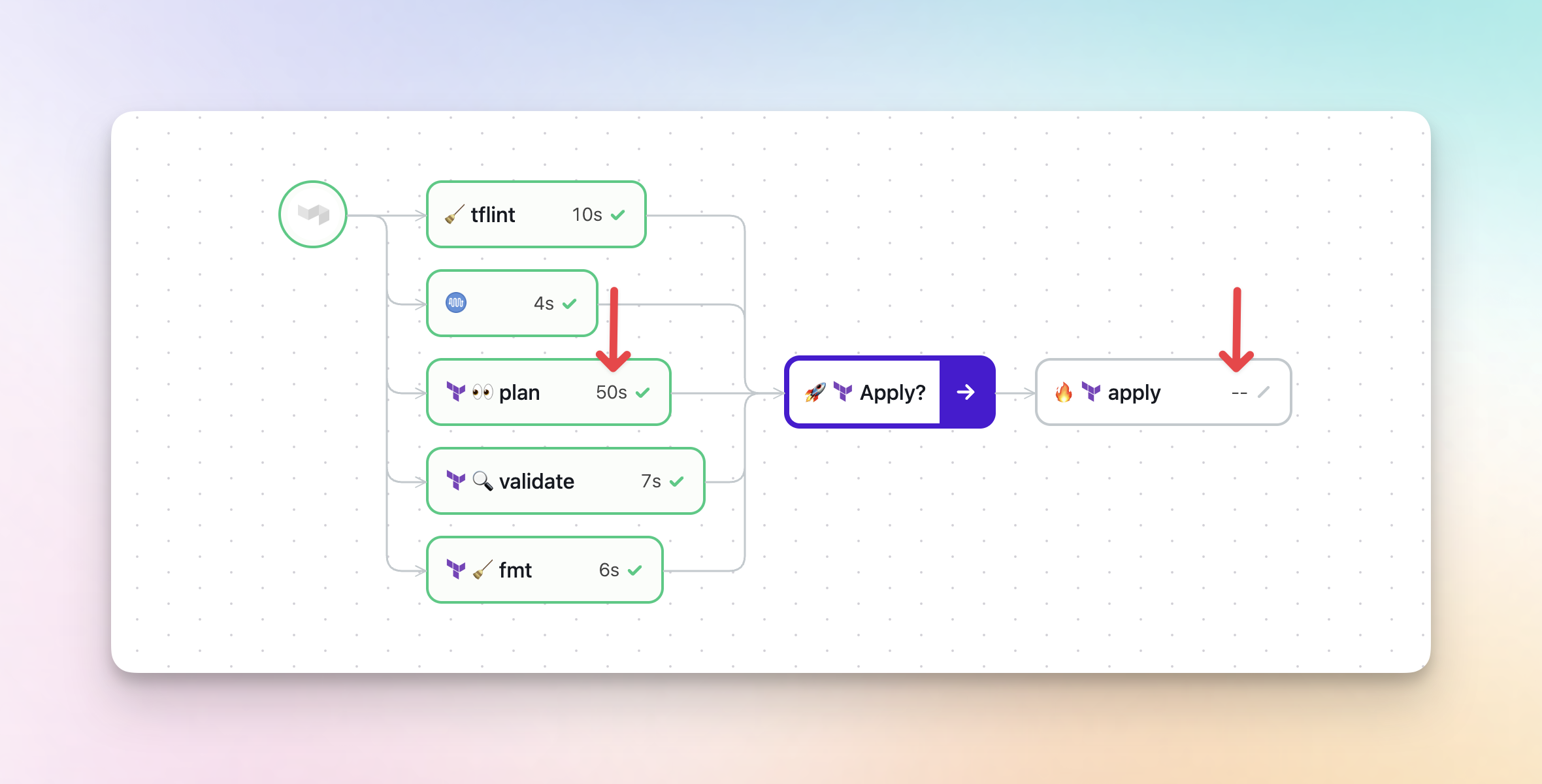

Run durations on canvas nodes

Steps on the build canvas now display their run duration directly on each node, so you can quickly spot slow steps and see the progress of running jobs without clicking into them. Steps that haven't started yet show a -- placeholder, so you can see at a glance which parts of your build are still waiting to run.

Chris

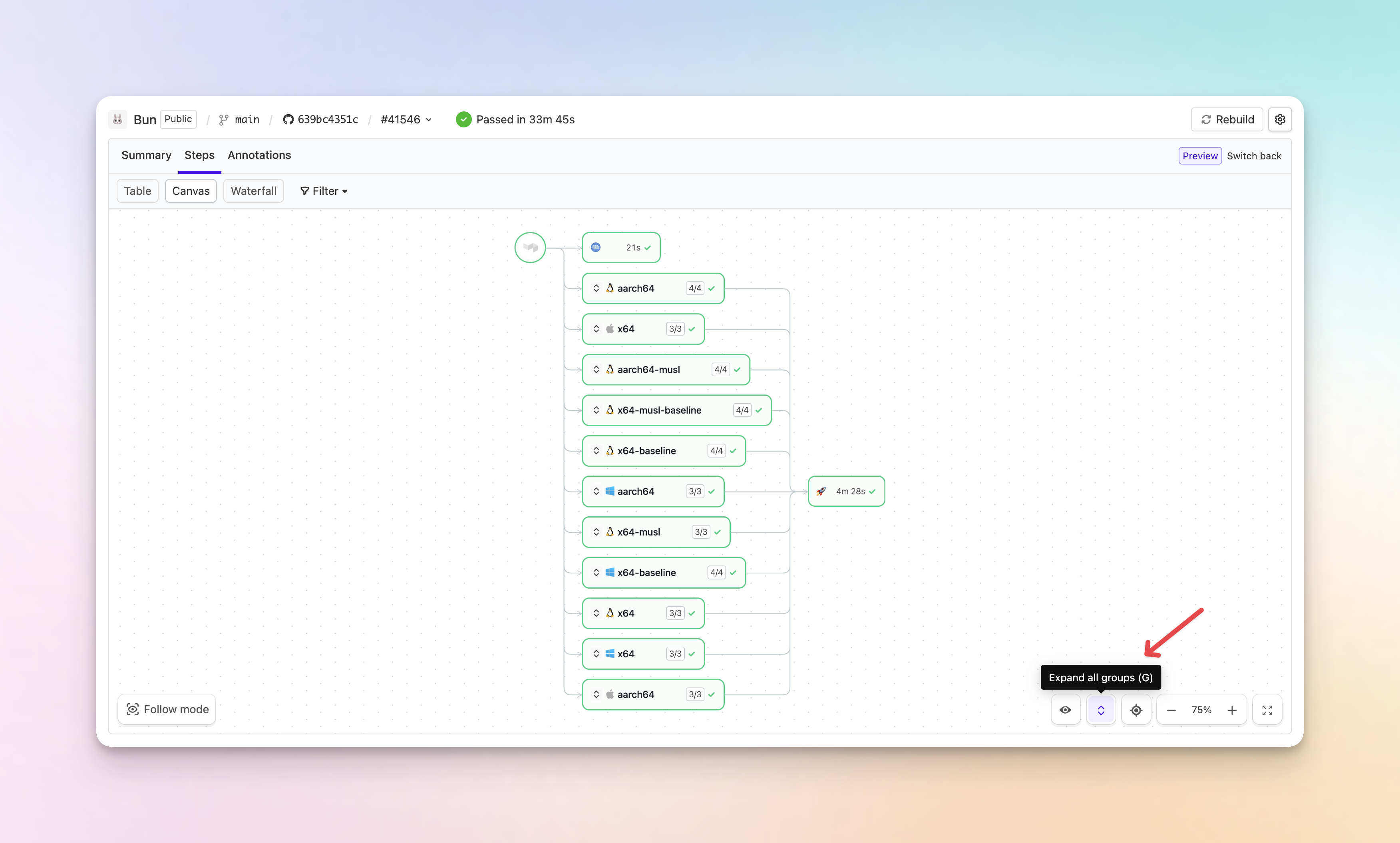

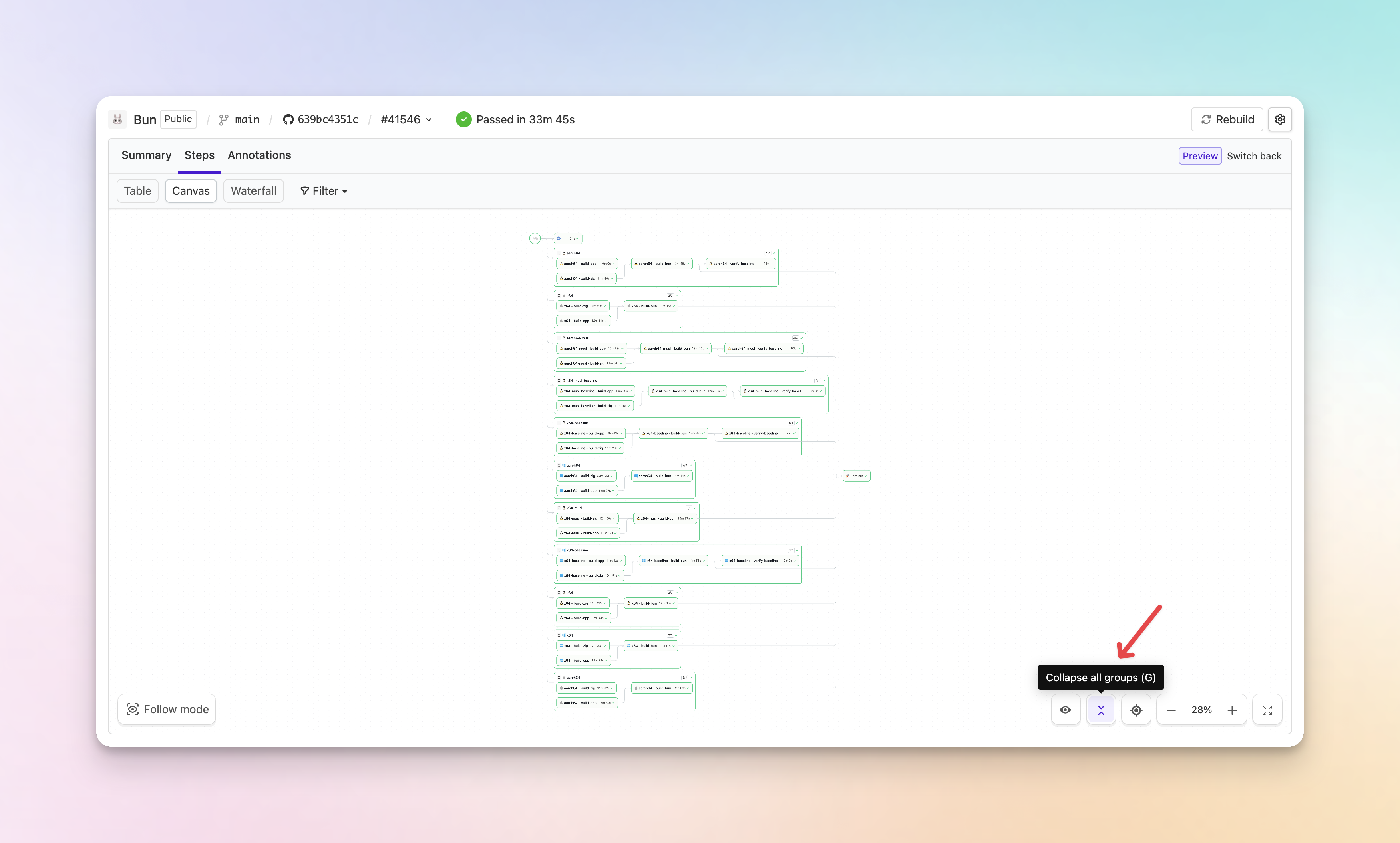

Collapsed group steps on the build canvas

Group steps on the build canvas are now collapsed by default, making complex dependency graphs much easier to follow at a glance.

- Collapsed by default: Group steps are now collapsed when you open a build, reducing visual noise and improving page performance on large pipelines.

- Expand and collapse all: A new button on the canvas lets you expand or collapse all group steps at once. You can also press G to toggle.

- Auto-expand on failure or input: Groups containing failed steps or steps waiting for user input are automatically expanded, so you never miss something that needs your attention.

You can still expand or collapse individual group steps by clicking on them.

Chris

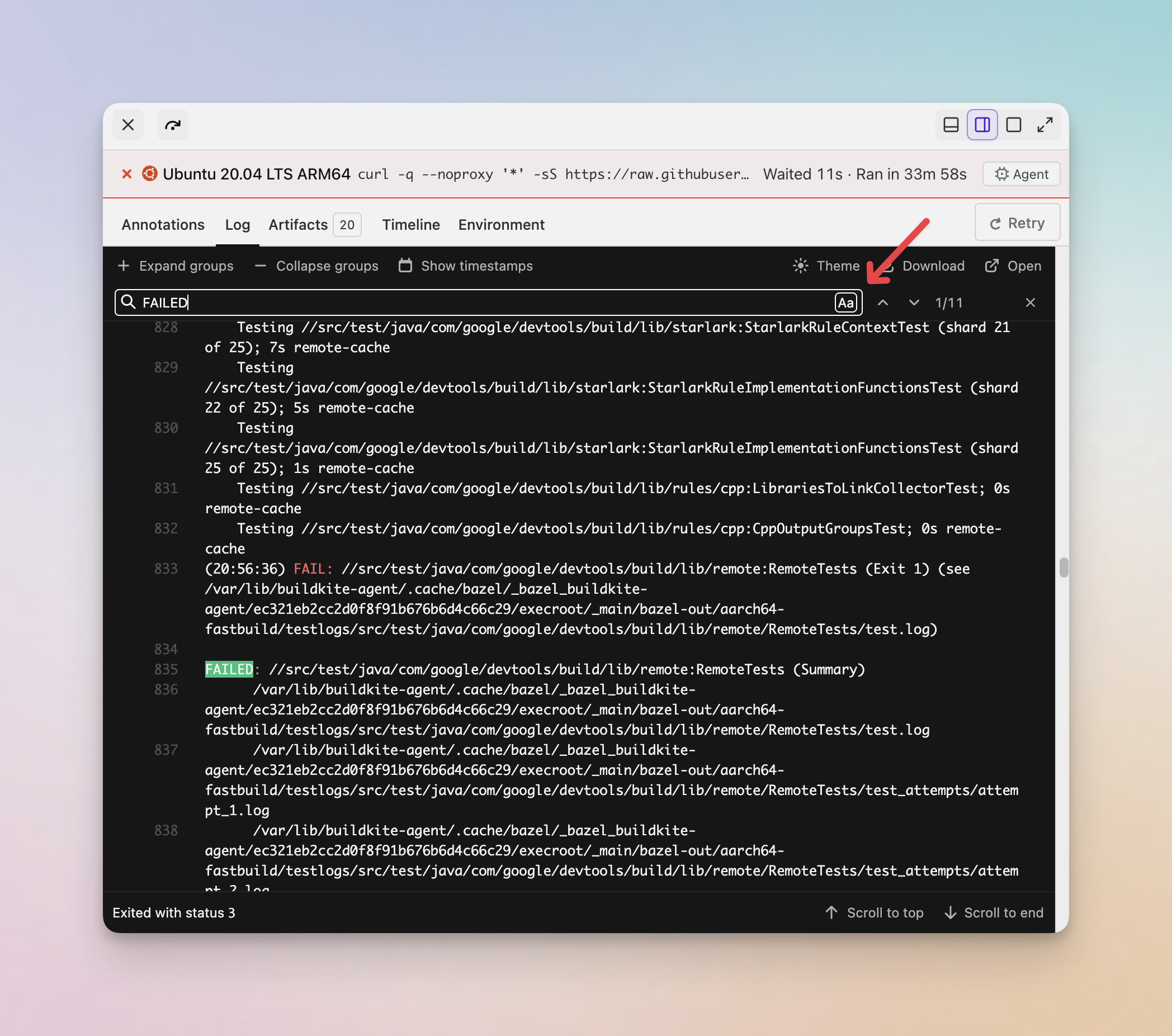

Case-sensitive search in build job logs

You can now toggle case-sensitive matching when searching through job logs on the new build page. Click the Aa button in the log search bar to switch between case-sensitive and case-insensitive search.

Chris

Buildkite MCP Server security and usability improvements

The Buildkite MCP Server now sanitizes all tool responses to protect agents from prompt injection attacks. Build logs, pipeline names, commit messages, and annotations are passed through a multi-stage sanitization pipeline before reaching the agent — filtering invisible characters, stripping control sequences, sanitizing HTML, and neutralizing LLM delimiter tokens.
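A multi-stage sanitizer of this kind can be pictured as a chain of small text filters. The sketch below is illustrative only and is not the MCP server's code: the patterns, the replacement marker, and the stage order are assumptions. Delimiter tokens are neutralized before HTML escaping here so the angle brackets are still recognizable.

```python
import html
import re

# Zero-width and directional characters that can hide instructions.
INVISIBLE = re.compile("[\u200b-\u200f\u2060\ufeff]")
# ANSI CSI sequences such as colour codes in build logs.
ANSI = re.compile(r"\x1b\[[0-9;?]*[A-Za-z]")
# Chat-template style delimiters such as <|im_start|>.
DELIMITER = re.compile(r"<\|[^|>]*\|>")


def sanitize(text):
    """Pass untrusted text through each stage in turn (hypothetical sketch)."""
    text = INVISIBLE.sub("", text)
    text = ANSI.sub("", text)
    text = DELIMITER.sub("[token removed]", text)
    # Escape remaining markup so it is treated as text, not HTML.
    return html.escape(text, quote=False)


cleaned = sanitize("ok\u200b \x1b[31mred\x1b[0m <|im_start|> <b>hi</b>")
```

Keeping each stage as its own pattern makes it straightforward to test the filters independently and to add new ones as new injection tricks appear.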

The list_builds tool no longer requires a pipeline_slug. When omitted, it queries across the entire organization, making it easier for agents to find failing builds without needing to call the tool once per pipeline.

When the Buildkite API returns an authentication error, the MCP server now surfaces it as a proper 401 response rather than a generic tool error. This means MCP clients and agents can correctly prompt you to re-authenticate instead of failing with a cryptic message.

A new --max-log-bytes flag (and BKLOG_MAX_LOG_BYTES environment variable) lets you cap how much log data the server downloads per request, defaulting to 100 MB. This gives you more control over memory consumption when working with large pipeline logs.

These improvements apply to both the open-source MCP server and the Remote MCP server.

Mark

Per-user rate limiting for the Buildkite MCP server

The Buildkite Remote MCP server now rate limits on a per-user basis, so one person can't chew through your organization's entire API quota.

If you've been hesitant to roll out the remote MCP server broadly, this should help. Each user gets their own limit of 50 requests per minute, so you can invite your whole team to connect their AI coding assistants to Buildkite without worrying about one heavy user blocking everyone else.

There's nothing to configure: per-user limits apply automatically. If someone hits their limit, only their requests are throttled. Everyone else carries on as normal.

For more on API rate limits across Buildkite, see the Remote MCP server limits documentation.

Himal

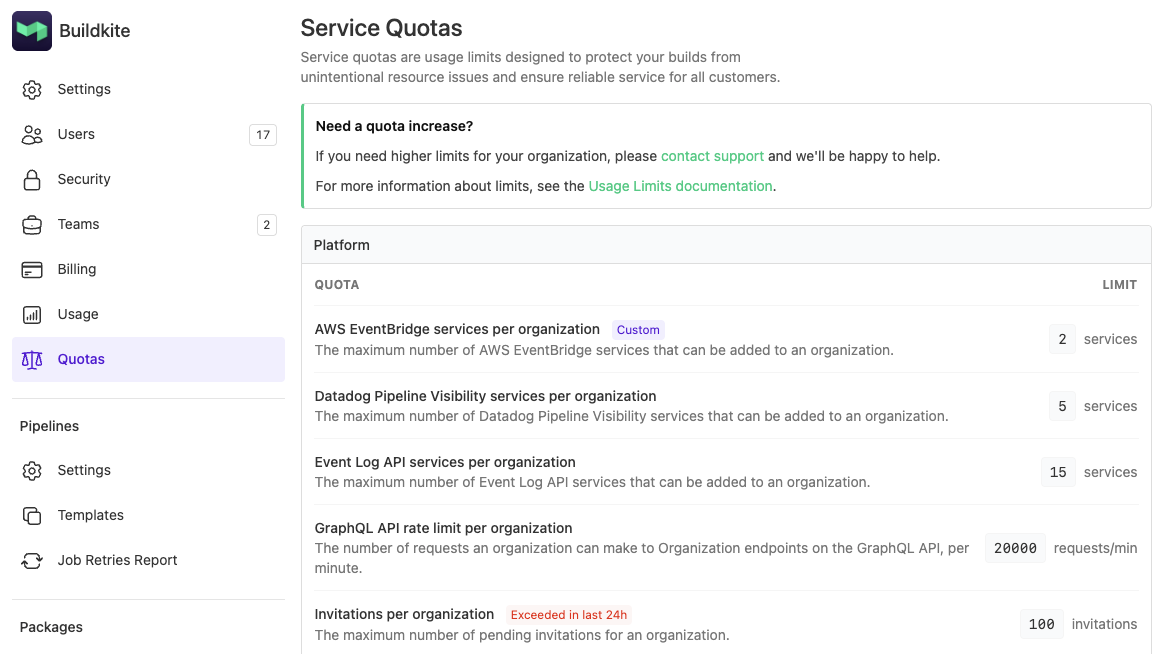

View your organization's service quotas

Organization administrators can now view the service quotas that apply to their organization directly in Buildkite. Head to Settings > Quotas to see your actual limits, broken down by product area.

Each quota shows the effective limit for your organization. A Custom badge indicates a limit that differs from the default for your plan, and an Exceeded in last 24h badge flags any quotas your organization has hit recently.

If you need a quota increased, contact support@buildkite.com or reach out to your Technical Account Manager.

For the full list of default limits, see the Limits documentation.

Grant

Improving authentication with the CLI

We've been busy changing the way the Buildkite CLI authenticates against organizations. We've added new features and security improvements so folks can feel safer giving the CLI access to their Buildkite organization.

OAuth availability

The CLI can now be authenticated via OAuth, with permissions scoped within the user's own accepted access. The permissions can also be customized to meet your needs for CLI use.

A single auth family

We've introduced an auth command, with various subcommands, to ensure all things authentication can be found in a logical spot. whoami and use can still be used, for now, performing the same actions as their new subcommands, bk auth status and bk auth switch, respectively.

We've also implemented bk auth login, replacing configure add, and bk auth logout, a new command which will clear keychain credentials for the current authenticated organization, or all keychain configurations with the --all flag.

No more text-based tokens

The CLI, until now, has been writing API tokens to a config file, typically at ~/.config/bk.yaml, which is convenient but a pattern we've wanted to move away from. As of v3.32.0, we'll no longer write to this file, only read from it. API tokens will be encrypted and stored in the OS keychain exclusively.

The CLI will fall back to reading bk.yaml files, so if those are in use, or you wish to continue using them, you can; you'll just see a warning in the terminal when you do. If you do wish to store API tokens in your bk.yaml file, you can manually adjust the file:

    organizations:
      my-cool-org:
        api_token: bkua_hunter28265989387498729792378

A lot to take in? Read the Buildkite CLI documentation.

Ben
